Modifying the Hadoop TeraSort Algorithm: Sorting by a LongWritable Key

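Recently I needed to partition a large graph with ParMetis. Its input is an undirected graph in adjacency-list form, sorted by vertex ID, so I decided to sort the graph with Hadoop's TeraSort. However, the TeraSort that ships with Hadoop treats the first 10 characters of each line as a text key and partitions on the first two characters of that key, so it cannot order lines numerically by vertex ID.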

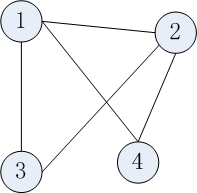

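Graphs are usually stored as adjacency lists, so the modified TeraSort sorts records by vertex ID. It supports both directed and undirected graphs, and edges may carry weights. The input format is described below using an undirected graph as the example.

For the example graph, the input data looks like this: fields are separated by \t, and the first column of each line is the vertex ID.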

1 2 3 4

3 1 2

2 1 3 4

4 1 2

Generalization: the modified TeraSort can sort any file by key as long as every line has the form key + "\t" + value, where key is an int or a long and value can be anything.
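To make the line format concrete, here is a minimal sketch in plain Java (no Hadoop dependency) of splitting one line into a long key and a string value. The class name `KeyValueLine` and its `parse` method are hypothetical helpers invented for this illustration, not part of the modified TeraSort.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.Map;

/** Hypothetical helper for illustration only: splits "key\tvalue" lines. */
final class KeyValueLine {

    /** Returns the long key before the first tab and the rest of the line as the value. */
    static Map.Entry<Long, String> parse(String line) {
        int tab = line.indexOf('\t');
        // A line without a tab is a key with an empty value (a vertex with no neighbours).
        String keyText = (tab < 0) ? line : line.substring(0, tab);
        String value = (tab < 0) ? "" : line.substring(tab + 1);
        // Long.parseLong throws NumberFormatException if the key is not an integer.
        return new SimpleEntry<>(Long.parseLong(keyText), value);
    }

    public static void main(String[] args) {
        Map.Entry<Long, String> entry = parse("2\t1\t3\t4");
        System.out.println("key=" + entry.getKey() + ", value=" + entry.getValue());
    }
}
```

Only the first tab matters for the split, so the value may itself contain tabs, as the adjacency lists above do.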

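How to modify it:

1. Because the input format has changed, start with the TeraRecordReader class. The main change is in the boolean next(LongWritable key, Text value) method, which decides how each line is parsed. The code is as follows: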

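    public boolean next(LongWritable key, Text value) throws IOException {
      // Read the next raw line; junk receives the byte offset, which is not needed.
      if (in.next(junk, line)) {
        String text = line.toString();
        String[] temp = text.split("\t");
        // The first tab-separated field is the vertex ID, used as the sort key.
        key.set(Long.parseLong(temp[0]));
        if (temp.length != 1) {
          // Everything after the first tab (the adjacency list) is the value.
          value.set(text.substring(temp[0].length() + 1));
        } else {
          // A vertex with no neighbours has an empty value.
          value.set("");
        }
        return true;
      } else {
        return false;
      }
    }

3. In the original TeraSort, each map task first reads the split points from the distributed cache and builds a two-level trie from them. The map task then reads the records of its split one by one and looks up the reduce task number for each record in the trie.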

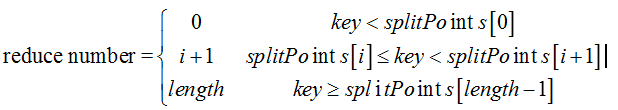

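Now that the keys are longs, there is no need to build a trie. The split points are stored in the splitPoints[] array, and the reduce task number is computed with the following formula, where length equals splitPoints.length: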

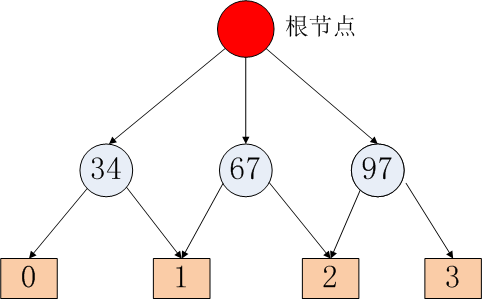
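For readers who cannot see the image, the rule can be written out as a piecewise function. This is a reconstruction from the getPartition method rather than a copy of the original figure; the symbols $k$ (the key) and $s_i$ (shorthand for splitPoints[i]) are notation assumed here.

```latex
\[
\operatorname{partition}(k) =
\begin{cases}
0, & k < s_0, \\
i + 1, & s_i \le k < s_{i+1}, \quad 0 \le i < \mathit{length} - 1, \\
\mathit{length}, & k \ge s_{\mathit{length}-1}.
\end{cases}
\]
```

With $\mathit{length}$ split points this yields $\mathit{length} + 1$ partitions, one per reduce task.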

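Suppose the number of reduce tasks is 4 (chosen by the user) and the split points are 34, 67, and 97. The split points then map to reduce task numbers as follows: keys less than 34 go to reduce task 0, keys from 34 up to but not including 67 go to reduce task 1, keys from 67 up to but not including 97 go to reduce task 2, and keys greater than or equal to 97 go to reduce task 3.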

The main change is to the int getPartition(LongWritable key, Text value, int numPartitions) method:

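    @Override
    public int getPartition(LongWritable key, Text value, int numPartitions) {
      // With a single reduce task there are no split points, so everything
      // goes to partition 0. Keys below the first split point also go there.
      if (splitPoints.length == 0 || key.get() < splitPoints[0].get()) {
        return 0;
      }
      // Find the interval [splitPoints[i], splitPoints[i+1]) that contains the key.
      for (int i = 0; i < splitPoints.length - 1; i++) {
        if (key.get() >= splitPoints[i].get() && key.get() < splitPoints[i+1].get()) {
          return i + 1;
        }
      }
      // Keys at or above the last split point go to the last partition.
      return splitPoints.length;
    }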
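The linear scan above is simple and fine for a handful of reducers. As a hedged alternative for larger reducer counts, the same mapping can be computed with a binary search over the split points. The sketch below is plain Java on a `long[]` rather than `LongWritable[]`, and the class `LongRangePartitioner` and method `partitionFor` are hypothetical names chosen for this example. It assumes the split points are sorted in ascending order without duplicates.

```java
import java.util.Arrays;

/** Hypothetical stand-alone illustration of range partitioning on long keys. */
final class LongRangePartitioner {

    /** Returns the partition index for key, given ascending split points. */
    static int partitionFor(long key, long[] splitPoints) {
        int pos = Arrays.binarySearch(splitPoints, key);
        // Found: key equals splitPoints[pos], which starts partition pos + 1.
        // Not found: binarySearch returns -(insertionPoint) - 1, and the insertion
        // point is the number of split points smaller than the key.
        return pos >= 0 ? pos + 1 : -(pos + 1);
    }

    public static void main(String[] args) {
        long[] splitPoints = {34L, 67L, 97L};
        for (long key : new long[] {5L, 34L, 66L, 67L, 97L, 1000L}) {
            System.out.println(key + " -> reduce task " + partitionFor(key, splitPoints));
        }
    }
}
```

For the split points 34, 67, and 97 this gives the same assignment as the example in the text, and an empty split-point array maps every key to partition 0.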

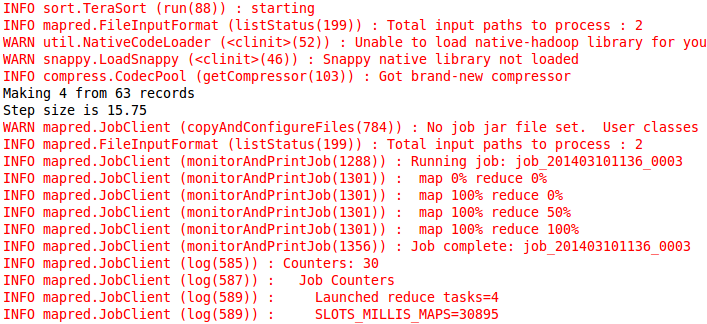

    job.setOutputFormat(TextOutputFormat.class);

5. Package the code as a jar (TeraSort.jar) and run it on the cluster. For example: hadoop jar TeraSort.jar TeraSortTest output 4

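Note that the arguments are <input> <output> <reduce number>. Unlike the original TeraSort, which takes the reducer count through -D mapred.reduce.tasks=value, this version makes the user state the number of reduce tasks explicitly. If the user forgot the option, the original TeraSort would start a single reduce task, and the whole algorithm would lose its point. The results of the run are as follows: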

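6. The sorted output is stored, in order, in the output directory as part-00000, part-00001, part-00002, and part-00003.

After running hadoop fs -getmerge output output-total, all of the data is collected, still in sorted order, in the output-total file.

getmerge writes the files to output-total one after another in the order part-00000, part-00001, part-00002, part-00003. The code is as follows: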

    /** Copy all files in a directory to one output file (merge). */
    public static boolean copyMerge(FileSystem srcFS, Path srcDir,
                                    FileSystem dstFS, Path dstFile,
                                    boolean deleteSource,
                                    Configuration conf, String addString) throws IOException {
      dstFile = checkDest(srcDir.getName(), dstFS, dstFile, false);

      if (!srcFS.getFileStatus(srcDir).isDir())
        return false;

      OutputStream out = dstFS.create(dstFile);

      try {
        // The files are appended in the order returned by listStatus.
        FileStatus contents[] = srcFS.listStatus(srcDir);
        for (int i = 0; i < contents.length; i++) {
          if (!contents[i].isDir()) {
            InputStream in = srcFS.open(contents[i].getPath());
            try {
              IOUtils.copyBytes(in, out, conf, false);
              if (addString!=null)
                out.write(addString.getBytes("UTF-8"));
            } finally {
              in.close();
            }
          }
        }
      } finally {
        out.close();
      }

      if (deleteSource) {
        return srcFS.delete(srcDir, true);
      } else {
        return true;
      }
    }

The order in which part-00000, part-00001, part-00002, and part-00003 are visited comes from the namenode: srcFS.listStatus returns the directory's entries as the namenode holds them in its inodes, which for HDFS is in file-name order.
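The reason a plain concatenation is enough is that every key in part-00000 is smaller than every key in part-00001, and so on. The following sketch illustrates that idea on the local file system with java.nio; it is not Hadoop's getmerge code, and the class `LocalPartMerge`, its methods, and the sample file contents are all hypothetical. It sorts the part files by name explicitly instead of relying on the listing order.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/** Hypothetical local-filesystem illustration; not Hadoop's getmerge implementation. */
final class LocalPartMerge {

    /** Concatenates the part-* files of srcDir into dstFile in file-name order. */
    static void merge(Path srcDir, Path dstFile) throws IOException {
        List<Path> parts;
        try (Stream<Path> entries = Files.list(srcDir)) {
            parts = entries
                .filter(p -> p.getFileName().toString().startsWith("part-"))
                .sorted(Comparator.comparing((Path p) -> p.getFileName().toString()))
                .collect(Collectors.toList());
        }
        try (OutputStream out = Files.newOutputStream(dstFile)) {
            for (Path part : parts) {
                Files.copy(part, out); // append this part's bytes to the merged file
            }
        }
    }

    /** Writes two tiny part files out of order, merges them, and returns the result. */
    static String demo() {
        try {
            Path dir = Files.createTempDirectory("output");
            Files.write(dir.resolve("part-00001"),
                "3\t1\t2\n4\t1\t2\n".getBytes(StandardCharsets.UTF_8));
            Files.write(dir.resolve("part-00000"),
                "1\t2\t3\t4\n2\t1\t3\t4\n".getBytes(StandardCharsets.UTF_8));
            Path merged = Files.createTempFile("output-total", ".txt");
            merge(dir, merged);
            return new String(Files.readAllBytes(merged), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.print(demo());
    }
}
```

Because each part file is already sorted and the partitions cover disjoint, increasing key ranges, the merged file comes out sorted by vertex ID without any further comparison of keys.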

1. TeraInputFormat.java

    /**
     * Licensed to the Apache Software Foundation (ASF) under one
     * or more contributor license agreements.  See the NOTICE file
     * distributed with this work for additional information
     * regarding copyright ownership.  The ASF licenses this file
     * to you under the Apache License, Version 2.0 (the
     * "License"); you may not use this file except in compliance
     * with the License.  You may obtain a copy of the License at
     *
     *     http://www.apache.org/licenses/LICENSE-2.0
     *
     * Unless required by applicable law or agreed to in writing, software
     * distributed under the License is distributed on an "AS IS" BASIS,
     * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
     * See the License for the specific language governing permissions and
     * limitations under the License.
     */

    package com.undirected.graph.sort;

    import java.io.IOException;
    import java.util.ArrayList;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.SequenceFile;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapred.FileInputFormat;
    import org.apache.hadoop.mapred.FileSplit;
    import org.apache.hadoop.mapred.InputSplit;
    import org.apache.hadoop.mapred.JobConf;
    import org.apache.hadoop.mapred.LineRecordReader;
    import org.apache.hadoop.mapred.RecordReader;
    import org.apache.hadoop.mapred.Reporter;
    import org.apache.hadoop.util.IndexedSortable;
    import org.apache.hadoop.util.QuickSort;

    /**
     * An input format that parses the text before the first tab of each line
     * as a long key and uses the rest of the line as the value. The key is a
     * LongWritable and the value is a Text.
     */
    public class TeraInputFormat extends FileInputFormat<LongWritable,Text> {

      static final String PARTITION_FILENAME = "_partition.lst";
      static final String SAMPLE_SIZE = "terasort.partitions.sample";
      private static JobConf lastConf = null;
      private static InputSplit[] lastResult = null;

      static class TextSampler implements IndexedSortable {
        private ArrayList<LongWritable> records = new ArrayList<LongWritable>();

        public int compare(int i, int j) {
          LongWritable left = records.get(i);
          LongWritable right = records.get(j);
          return left.compareTo(right);
        }

        public void swap(int i, int j) {
          LongWritable left = records.get(i);
          LongWritable right = records.get(j);
          records.set(j, left);
          records.set(i, right);
        }

        public void addKey(LongWritable key) {
          records.add(key);
        }

        /**
         * Find the split points for a given sample. The sample keys are sorted
         * and down sampled to find even split points for the partitions. The
         * returned keys should be the start of their respective partitions.
         * @param numPartitions the desired number of partitions
         * @return an array of size numPartitions - 1 that holds the split points
         */
        LongWritable[] createPartitions(int numPartitions) {
          int numRecords = records.size();
          System.out.println("Making " + numPartitions + " from " + numRecords +
                             " records");
          if (numPartitions > numRecords) {
            throw new IllegalArgumentException
              ("Requested more partitions than input keys (" + numPartitions +
               " > " + numRecords + ")");
          }
          new QuickSort().sort(this, 0, records.size());
          float stepSize = numRecords / (float) numPartitions;
          System.out.println("Step size is " + stepSize);
          LongWritable[] result = new LongWritable[numPartitions-1];
          for(int i=1; i < numPartitions; ++i) {
            result[i-1] = records.get(Math.round(stepSize * i));
          }
          // System.out.println("result :"+Arrays.toString(result));
          return result;
        }
      }

      /**
       * Use the input splits to take samples of the input and generate sample
       * keys. By default reads 100,000 keys from 10 locations in the input, sorts
       * them and picks N-1 keys to generate N equally sized partitions.
       * @param conf the job to sample
       * @param partFile where to write the output file to
       * @throws IOException if something goes wrong
       */
      public static void writePartitionFile(JobConf conf,
                                            Path partFile) throws IOException {
        TeraInputFormat inFormat = new TeraInputFormat();
        TextSampler sampler = new TextSampler();
        LongWritable key = new LongWritable();
        Text value = new Text();
        int partitions = conf.getNumReduceTasks();
        long sampleSize = conf.getLong(SAMPLE_SIZE, 100000);
        InputSplit[] splits = inFormat.getSplits(conf, conf.getNumMapTasks());
        int samples = Math.min(10, splits.length);
        long recordsPerSample = sampleSize / samples;
        int sampleStep = splits.length / samples;
        long records = 0;
        // take N samples from different parts of the input
        for(int i=0; i < samples; ++i) {
          RecordReader<LongWritable,Text> reader =
            inFormat.getRecordReader(splits[sampleStep * i], conf, null);
          while (reader.next(key, value)) {
            sampler.addKey(key);
            // the sampler keeps a reference to the key, so use a fresh object
            key = new LongWritable();
            records += 1;
            if ((i+1) * recordsPerSample <= records) {
              break;
            }
          }
        }
        FileSystem outFs = partFile.getFileSystem(conf);
        if (outFs.exists(partFile)) {
          outFs.delete(partFile, false);
        }
        SequenceFile.Writer writer =
          SequenceFile.createWriter(outFs, conf, partFile, LongWritable.class,
                                    NullWritable.class);
        NullWritable nullValue = NullWritable.get();
        for(LongWritable split : sampler.createPartitions(partitions)) {
          writer.append(split, nullValue);
        }
        writer.close();
      }

      static class TeraRecordReader implements RecordReader<LongWritable,Text> {
        private LineRecordReader in;
        private LongWritable junk = new LongWritable();
        private Text line = new Text();

        public TeraRecordReader(Configuration job,
                                FileSplit split) throws IOException {
          in = new LineRecordReader(job, split);
        }

        public void close() throws IOException {
          in.close();
        }

        public LongWritable createKey() {
          return new LongWritable();
        }

        public Text createValue() {
          return new Text();
        }

        public long getPos() throws IOException {
          return in.getPos();
        }

        public float getProgress() throws IOException {
          return in.getProgress();
        }

        public boolean next(LongWritable key, Text value) throws IOException {
          if (in.next(junk, line)) {
            String[] temp = line.toString().split("\t");
            // the first tab-separated field is the key
            key.set(Long.parseLong(temp[0]));
            if (temp.length != 1) {
              // everything after the first tab is the value
              value.set(line.toString().substring(temp[0].length()+1));
            } else {
              value.set("");
            }
            return true;
          } else {
            return false;
          }
        }
      }

      @Override
      public RecordReader<LongWritable, Text>
          getRecordReader(InputSplit split,
                          JobConf job,
                          Reporter reporter) throws IOException {
        return new TeraRecordReader(job, (FileSplit) split);
      }

      @Override
      public InputSplit[] getSplits(JobConf conf, int splits) throws IOException {
        if (conf == lastConf) {
          return lastResult;
        }
        lastConf = conf;
        lastResult = super.getSplits(conf, splits);
        return lastResult;
      }
    }
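The split-point selection in createPartitions can be tried out without a cluster. The sketch below mirrors its arithmetic on a plain `long[]`: sort the sample, then take the element at every rounded multiple of the step size. The class `SplitPointPicker` and the method `pickSplitPoints` are hypothetical names for this illustration, and `Arrays.sort` stands in for Hadoop's QuickSort.

```java
import java.util.Arrays;

/** Hypothetical stand-alone illustration of choosing split points from a sample. */
final class SplitPointPicker {

    /** Returns numPartitions - 1 split points taken at even steps through the sorted sample. */
    static long[] pickSplitPoints(long[] sample, int numPartitions) {
        if (numPartitions < 1 || numPartitions > sample.length) {
            throw new IllegalArgumentException(
                "Need between 1 and " + sample.length + " partitions, got " + numPartitions);
        }
        long[] sorted = sample.clone(); // leave the caller's array untouched
        Arrays.sort(sorted);
        float stepSize = sorted.length / (float) numPartitions;
        long[] result = new long[numPartitions - 1];
        for (int i = 1; i < numPartitions; i++) {
            // Each split point is the first key of partition i.
            result[i - 1] = sorted[Math.round(stepSize * i)];
        }
        return result;
    }

    public static void main(String[] args) {
        long[] sample = {8L, 3L, 1L, 6L, 2L, 7L, 5L, 4L};
        System.out.println(Arrays.toString(pickSplitPoints(sample, 4)));
    }
}
```

With one partition the result is an empty array, which is why a partitioner that reads these split points has to cope with having none.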

2. TeraSort.java

    package com.undirected.graph.sort;

    import java.io.IOException;
    import java.net.URI;
    import java.util.ArrayList;
    import java.util.List;

    import org.apache.commons.logging.Log;
    import org.apache.commons.logging.LogFactory;
    import org.apache.hadoop.conf.Configured;
    import org.apache.hadoop.filecache.DistributedCache;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.SequenceFile;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapred.FileOutputFormat;
    import org.apache.hadoop.mapred.JobClient;
    import org.apache.hadoop.mapred.JobConf;
    import org.apache.hadoop.mapred.Partitioner;
    import org.apache.hadoop.mapred.TextOutputFormat;
    import org.apache.hadoop.util.Tool;
    import org.apache.hadoop.util.ToolRunner;

    public class TeraSort extends Configured implements Tool {
      private static final Log LOG = LogFactory.getLog(TeraSort.class);

      /**
       * A partitioner that splits long keys into roughly equal partitions
       * in a global sorted order.
       */
      static class TotalOrderPartitioner implements Partitioner<LongWritable,Text> {
        private LongWritable[] splitPoints;

        /**
         * Read the cut points from the given sequence file.
         * @param fs the file system
         * @param p the path to read
         * @param job the job config
         * @return the keys to split the partitions on
         * @throws IOException
         */
        private static LongWritable[] readPartitions(FileSystem fs, Path p,
                                                     JobConf job) throws IOException {
          SequenceFile.Reader reader = new SequenceFile.Reader(fs, p, job);
          List<LongWritable> parts = new ArrayList<LongWritable>();
          LongWritable key = new LongWritable();
          NullWritable value = NullWritable.get();
          while (reader.next(key, value)) {
            parts.add(key);
            // the list keeps a reference to the key, so use a fresh object
            key = new LongWritable();
          }
          reader.close();
          return parts.toArray(new LongWritable[parts.size()]);
        }

        @Override
        public void configure(JobConf job) {
          try {
            FileSystem fs = FileSystem.getLocal(job);
            Path partFile = new Path(TeraInputFormat.PARTITION_FILENAME);
            splitPoints = readPartitions(fs, partFile, job);
          } catch (IOException ie) {
            throw new IllegalArgumentException("can't read partitions file", ie);
          }
        }

        @Override
        public int getPartition(LongWritable key, Text value, int numPartitions) {
          // With a single reduce task there are no split points, so everything
          // goes to partition 0. Keys below the first split point also go there.
          if (splitPoints.length == 0 || key.get() < splitPoints[0].get()) {
            return 0;
          }
          for (int i = 0; i < splitPoints.length - 1; i++) {
            if (key.get() >= splitPoints[i].get() && key.get() < splitPoints[i+1].get()) {
              return i + 1;
            }
          }
          return splitPoints.length;
        }
      }

      @Override
      public int run(String[] args) throws Exception {
        LOG.info("starting");
        JobConf job = (JobConf) getConf();
        Path inputDir = new Path(args[0]);
        inputDir = inputDir.makeQualified(inputDir.getFileSystem(job));
        Path partitionFile = new Path(inputDir, TeraInputFormat.PARTITION_FILENAME);
        URI partitionUri = new URI(partitionFile.toString() +
                                   "#" + TeraInputFormat.PARTITION_FILENAME);
        TeraInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        // the reduce task count is the third command-line argument
        job.setNumReduceTasks(Integer.parseInt(args[2]));
        job.setJobName("TeraSort");
        job.setJarByClass(TeraSort.class);
        job.setOutputKeyClass(LongWritable.class);
        job.setOutputValueClass(Text.class);
        job.setInputFormat(TeraInputFormat.class);
        job.setOutputFormat(TextOutputFormat.class);
        job.setPartitionerClass(TotalOrderPartitioner.class);
        TeraInputFormat.writePartitionFile(job, partitionFile);
        DistributedCache.addCacheFile(partitionUri, job);
        DistributedCache.createSymlink(job);
        job.setInt("dfs.replication", 1);
        JobClient.runJob(job);
        LOG.info("done");
        return 0;
      }

      /**
       * @param args
       */
      public static void main(String[] args) throws Exception {
        if (args.length < 3) {
          System.out.println("Usage:<input> <output> <reduce number>");
          System.exit(-1);
        }
        int res = ToolRunner.run(new JobConf(), new TeraSort(), args);
        System.exit(res);
      }
    }
