Kafka: A Getting-Started Example

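Abstract: This article walks through a simple getting-started example for Kafka: a Maven project with one producer and one consumer.

Source code download: https://github.com/appleappleapple/BigDataLearning

For the Kafka installation steps, see: Installing and Running Kafka on Windows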

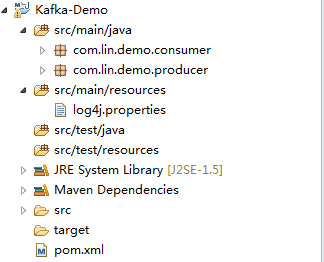

整個工程目錄如下:

1. The pom file

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.lin</groupId>

<artifactId>Kafka-Demo</artifactId>

<version>0.0.1-SNAPSHOT</version>

<dependencies>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.10</artifactId>

<version>0.9.0.0</version>

</dependency>

<dependency>

<groupId>org.opentsdb</groupId>

<artifactId>java-client</artifactId>

<version>2.1.0-SNAPSHOT</version>

<exclusions>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

</exclusion>

<exclusion>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

</exclusion>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>jcl-over-slf4j</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>1.2.4</version>

</dependency>

</dependencies>

</project>

2. Producer

package com.lin.demo.producer;

import java.util.Properties;

import kafka.javaapi.producer.Producer;

import kafka.producer.KeyedMessage;

import kafka.producer.ProducerConfig;

public class KafkaProducer {

private final Producer<String, String> producer;

public final static String TOPIC = "linlin";

private KafkaProducer() {

Properties props = new Properties();

// Address (host:port) of the Kafka broker

props.put("metadata.broker.list", "127.0.0.1:9092");

// The 0.7-era "zk.connect" setting is omitted: this producer locates brokers through metadata.broker.list

// Serializer class for message values

props.put("serializer.class", "kafka.serializer.StringEncoder");

// Serializer class for message keys

props.put("key.serializer.class", "kafka.serializer.StringEncoder");

props.put("request.required.acks", "-1");

producer = new Producer<String, String>(new ProducerConfig(props));

}

void produce() {

int messageNo = 1000;

final int COUNT = 10000;

while (messageNo < COUNT) {

String key = String.valueOf(messageNo);

String data = "hello kafka message " + key;

producer.send(new KeyedMessage<String, String>(TOPIC, key, data));

System.out.println(data);

messageNo++;

}

}

public static void main(String[] args) {

new KafkaProducer().produce();

}

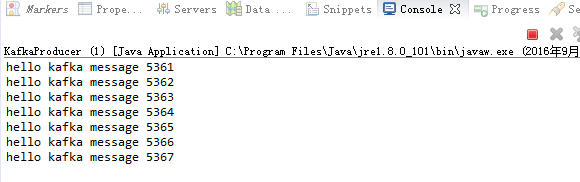

}右鍵:run as java application

執行結果:
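Note that the loop in produce() starts at 1000 and stops before 10000, so it sends 9,000 messages, not 10,000. The following is a minimal sketch that reproduces the same key and payload sequence using only the JDK, so it runs without a broker. The class name MessageSequence and its methods are hypothetical and are not part of the original project.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of the original project: it rebuilds the
// payload sequence that produce() sends, without contacting a broker.
class MessageSequence {

    // Mirrors the loop bounds in produce(): first key 1000, last key 9999.
    static final int FIRST = 1000;
    static final int END_EXCLUSIVE = 10000;

    // Same payload format as the producer: "hello kafka message " + key.
    static String payloadFor(int messageNo) {
        return "hello kafka message " + String.valueOf(messageNo);
    }

    // Collects every payload in the order the producer would send it.
    static List<String> allPayloads() {
        List<String> payloads = new ArrayList<String>();
        for (int messageNo = FIRST; messageNo < END_EXCLUSIVE; messageNo++) {
            payloads.add(payloadFor(messageNo));
        }
        return payloads;
    }

    public static void main(String[] args) {
        List<String> payloads = allPayloads();
        System.out.println("count = " + payloads.size());                   // count = 9000
        System.out.println("first = " + payloads.get(0));                   // first = hello kafka message 1000
        System.out.println("last  = " + payloads.get(payloads.size() - 1)); // last  = hello kafka message 9999
    }
}
```

When checking the consumer output later, these are the first and last payloads to look for.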

3. Consumer

package com.lin.demo.consumer;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Properties;

import kafka.consumer.ConsumerConfig;

import kafka.consumer.ConsumerIterator;

import kafka.consumer.KafkaStream;

import kafka.javaapi.consumer.ConsumerConnector;

import kafka.serializer.StringDecoder;

import kafka.utils.VerifiableProperties;

import com.lin.demo.producer.KafkaProducer;

public class KafkaConsumer {

private final ConsumerConnector consumer;

private KafkaConsumer() {

Properties props = new Properties();

// ZooKeeper connection string

props.put("zookeeper.connect", "127.0.0.1:2181");

// group.id identifies the consumer group this consumer belongs to

props.put("group.id", "lingroup");

// ZooKeeper session timeout and related tuning

props.put("zookeeper.session.timeout.ms", "4000");

props.put("zookeeper.sync.time.ms", "200");

props.put("rebalance.max.retries", "5");

props.put("rebalance.backoff.ms", "1200");

props.put("auto.commit.interval.ms", "1000");

props.put("auto.offset.reset", "smallest");

// No serializer setting is needed here: the key and value decoders are passed to createMessageStreams() in consume()

ConsumerConfig config = new ConsumerConfig(props);

consumer = kafka.consumer.Consumer.createJavaConsumerConnector(config);

}

void consume() {

Map<String, Integer> topicCountMap = new HashMap<String, Integer>();

// Request one stream for this topic

topicCountMap.put(KafkaProducer.TOPIC, 1);

StringDecoder keyDecoder = new StringDecoder(new VerifiableProperties());

StringDecoder valueDecoder = new StringDecoder(new VerifiableProperties());

Map<String, List<KafkaStream<String, String>>> consumerMap = consumer.createMessageStreams(topicCountMap, keyDecoder, valueDecoder);

KafkaStream<String, String> stream = consumerMap.get(KafkaProducer.TOPIC).get(0);

ConsumerIterator<String, String> it = stream.iterator();

while (it.hasNext())

System.out.println("<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<" + it.next().message() + "<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<");

}

public static void main(String[] args) {

new KafkaConsumer().consume();

}

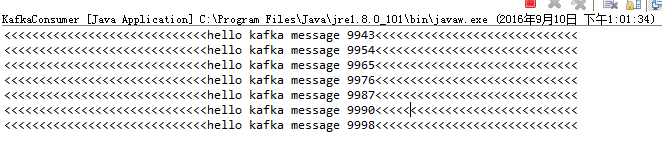

}執行結果:

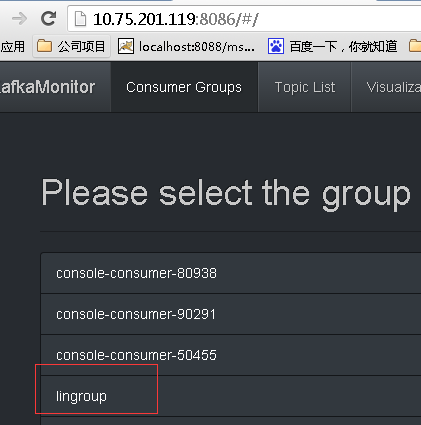

監控頁面
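The consumer settings from the constructor above can be gathered in one place, which makes it easier to see what each one does. The following is a minimal sketch that uses only java.util.Properties, so it runs without Kafka on the classpath. The class name ConsumerSettings and its build method are hypothetical and are not part of the original project. The sketch leaves out "serializer.class", on the assumption that it has no effect here because the decoders are passed directly to createMessageStreams().

```java
import java.util.Properties;

// Hypothetical helper, not part of the original project: it collects the
// consumer settings used in this article into a single Properties object.
class ConsumerSettings {

    static Properties build(String zookeeper, String groupId) {
        Properties props = new Properties();
        // Where the consumer finds ZooKeeper, and which group it joins.
        props.put("zookeeper.connect", zookeeper);
        props.put("group.id", groupId);
        // ZooKeeper session timeout and sync time, in milliseconds.
        props.put("zookeeper.session.timeout.ms", "4000");
        props.put("zookeeper.sync.time.ms", "200");
        // Rebalance retry count and the pause between attempts.
        props.put("rebalance.max.retries", "5");
        props.put("rebalance.backoff.ms", "1200");
        // How often consumed offsets are committed automatically.
        props.put("auto.commit.interval.ms", "1000");
        // With no committed offset, start from the earliest available message.
        props.put("auto.offset.reset", "smallest");
        return props;
    }

    public static void main(String[] args) {
        Properties props = build("127.0.0.1:2181", "lingroup");
        // Print each setting; iteration order is not guaranteed.
        for (String name : props.stringPropertyNames()) {
            System.out.println(name + " = " + props.getProperty(name));
        }
    }
}
```

The returned Properties object could then be passed to new ConsumerConfig(props), as the constructor in this article does.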
