Kafka Java Examples

JDK version: 1.6.0_20

The official example at http://kafka.apache.org/07/quickstart.html is not complete; the code below is my extended version, which compiles and runs.

Producer Code

- import java.util.*;

- import kafka.message.Message;

- import kafka.producer.ProducerConfig;

- import kafka.javaapi.producer.Producer;

- import kafka.javaapi.producer.ProducerData;

- public class ProducerSample {

- public static void main(String[] args) {

- ProducerSample ps = new ProducerSample();

- Properties props = new Properties();

- props.put("zk.connect", "127.0.0.1:2181");

- props.put("serializer.class", "kafka.serializer.StringEncoder");

- ProducerConfig config = new ProducerConfig(props);

- Producer<String, String> producer = new Producer<String, String>(config);

- ProducerData<String, String> data = new ProducerData<String, String>("test-topic", "test-message2");

- producer.send(data);

- producer.close();

- }

- }

Consumer Code

- import java.nio.ByteBuffer;

- import java.util.HashMap;

- import java.util.List;

- import java.util.Map;

- import java.util.Properties;

- import java.util.concurrent.ExecutorService;

- import java.util.concurrent.Executors;

- import kafka.consumer.Consumer;

- import kafka.consumer.ConsumerConfig;

- import kafka.consumer.KafkaStream;

- import kafka.javaapi.consumer.ConsumerConnector;

- import kafka.message.Message;

- import kafka.message.MessageAndMetadata;

- public class ConsumerSample {

- public static void main(String[] args) {

- // specify some consumer properties

- Properties props = new Properties();

- props.put("zk.connect", "localhost:2181");

- props.put("zk.connectiontimeout.ms", "1000000");

- props.put("groupid", "test_group");

- // Create the connection to the cluster

- ConsumerConfig consumerConfig = new ConsumerConfig(props);

- ConsumerConnector consumerConnector = Consumer.createJavaConsumerConnector(consumerConfig);

- // create 4 partitions of the stream for topic “test-topic”, to allow 4 threads to consume

- HashMap<String, Integer> map = new HashMap<String, Integer>();

- map.put("test-topic", 4);

- Map<String, List<KafkaStream<Message>>> topicMessageStreams =

- consumerConnector.createMessageStreams(map);

- List<KafkaStream<Message>> streams = topicMessageStreams.get("test-topic");

- // create list of 4 threads to consume from each of the partitions

- ExecutorService executor = Executors.newFixedThreadPool(4);

- // consume the messages in the threads

- for (final KafkaStream<Message> stream : streams) {

- executor.submit(new Runnable() {

- public void run() {

- for (MessageAndMetadata msgAndMetadata : stream) {

- // process message (msgAndMetadata.message())

- System.out.println("topic: " + msgAndMetadata.topic());

- Message message = (Message) msgAndMetadata.message();

- ByteBuffer buffer = message.payload();

- byte[] bytes = new byte[message.payloadSize()];

- buffer.get(bytes);

- String tmp = new String(bytes);

- System.out.println("message content: " + tmp);

- }

- }

- });

- }

- }

- }

After starting ZooKeeper and the Kafka server, run the Producer and Consumer code in turn.

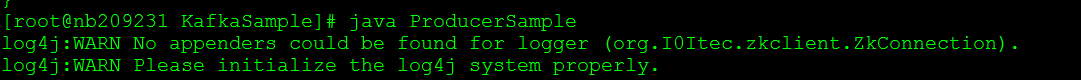

Running ProducerSample:

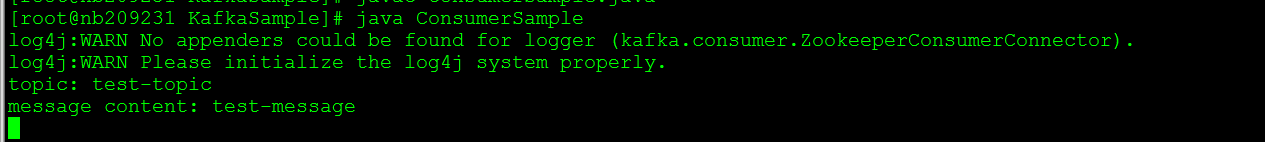

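Running ConsumerSample:

Since I am not familiar with Java multithreading, I made a small change to the official Consumer code so that it reads a single stream on the main thread, as follows: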

- import java.nio.ByteBuffer;

- import java.util.HashMap;

- import java.util.List;

- import java.util.Map;

- import java.util.Properties;

- import kafka.consumer.Consumer;

- import kafka.consumer.ConsumerConfig;

- import kafka.consumer.KafkaStream;

- import kafka.javaapi.consumer.ConsumerConnector;

- import kafka.message.Message;

- import kafka.message.MessageAndMetadata;

- public class ConsumerSample2 {

- public static void main(String[] args) {

- // specify some consumer properties

- Properties props = new Properties();

- props.put("zk.connect", "localhost:2181");

- props.put("zk.connectiontimeout.ms", "1000000");

- props.put("groupid", "test_group");

- // Create the connection to the cluster

- ConsumerConfig consumerConfig = new ConsumerConfig(props);

- ConsumerConnector consumerConnector = Consumer.createJavaConsumerConnector(consumerConfig);

- HashMap<String, Integer> map = new HashMap<String, Integer>();

- map.put("test-topic", 1);

- Map<String, List<KafkaStream<Message>>> topicMessageStreams =

- consumerConnector.createMessageStreams(map);

- List<KafkaStream<Message>> streams = topicMessageStreams.get("test-topic");

- for (final KafkaStream<Message> stream : streams) {

- for (MessageAndMetadata msgAndMetadata : stream) {

- // process message (msgAndMetadata.message())

- System.out.println("topic: " + msgAndMetadata.topic());

- Message message = (Message) msgAndMetadata.message();

- ByteBuffer buffer = message.payload();

- byte[] bytes = new byte[message.payloadSize()];

- buffer.get(bytes);

- String tmp = new String(bytes);

- System.out.println("message content: " + tmp);

- }

- }

- }

- }
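
The payload-to-string step in the consumer loops above can be isolated into a small helper. The following is a minimal sketch using only `java.nio`, not Kafka classes; the class name `PayloadDecoder` is made up for illustration. It assumes the payload is UTF-8 text, whereas the article's code relies on the platform default charset.

```java
import java.nio.ByteBuffer;
import java.nio.charset.Charset;

public class PayloadDecoder {
    // Assumption: messages are UTF-8 text; the article's code uses the platform default charset.
    private static final Charset UTF8 = Charset.forName("UTF-8");

    /** Copies the remaining bytes of the buffer and decodes them as UTF-8. */
    public static String decode(ByteBuffer payload) {
        // duplicate() shares the content but has its own position,
        // so reading here does not move the caller's buffer.
        ByteBuffer copy = payload.duplicate();
        byte[] bytes = new byte[copy.remaining()];
        copy.get(bytes);
        return new String(bytes, UTF8);
    }

    public static void main(String[] args) {
        ByteBuffer payload = ByteBuffer.wrap("test-message2".getBytes(UTF8));
        System.out.println("message content: " + decode(payload));
    }
}
```

Naming the charset explicitly keeps the result the same on machines whose default encodings differ.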

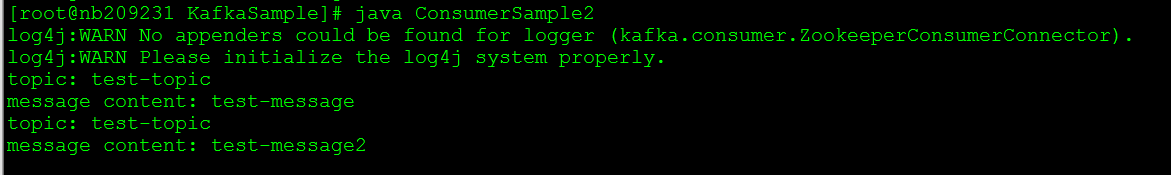

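I then sent another "test-message2" message from the Producer, and the Consumer received two messages, as shown below:

Kafka serves as a distributed log collection or system monitoring service, and it is worth using where it fits. Deploying Kafka involves setting up both a ZooKeeper environment and a Kafka environment, plus some configuration. The rest of this article describes how to use Kafka.

We use 3 ZooKeeper instances to build the ZooKeeper cluster and 2 Kafka brokers to build the Kafka cluster.

Kafka is version 0.8 and ZooKeeper is version 3.4.5.

I. Building the ZooKeeper cluster

We have 3 ZooKeeper instances: zk-0, zk-1, and zk-2. If you are only testing, a single ZooKeeper instance is enough.

1) zk-0

Adjust the configuration file: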

- clientPort=2181

- server.0=127.0.0.1:2888:3888

- server.1=127.0.0.1:2889:3889

- server.2=127.0.0.1:2890:3890

- ##Only the settings above need to change; keep the defaults for everything else

Start ZooKeeper:

- ./zkServer.sh start

2) zk-1

Adjust the configuration file (all other settings are the same as for zk-0):

- clientPort=2182

- ##Only the setting above needs to change; keep the defaults for everything else

Start ZooKeeper:

- ./zkServer.sh start

3) zk-2

Adjust the configuration file (all other settings are the same as for zk-0):

- clientPort=2183

- ##Only the setting above needs to change; keep the defaults for everything else

Start ZooKeeper:

- ./zkServer.sh start
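
The three configurations above differ only in `clientPort`, while the `server.N` lines are shared. A minimal sketch that prints the per-instance settings follows. The class name `ZkConfigSketch` is hypothetical, and the port pattern (2181+i, 2888+i, 3888+i, all on 127.0.0.1) is simply read off the values listed in this section.

```java
public class ZkConfigSketch {
    /**
     * Renders the client port for one instance plus the shared server list.
     * Assumption: all instances run on 127.0.0.1 with consecutive ports,
     * as in the article's three-instance layout.
     */
    public static String render(int instanceIndex, int instanceCount) {
        StringBuilder sb = new StringBuilder();
        sb.append("clientPort=").append(2181 + instanceIndex).append('\n');
        for (int i = 0; i < instanceCount; i++) {
            sb.append("server.").append(i).append("=127.0.0.1:")
              .append(2888 + i).append(':').append(3888 + i).append('\n');
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        for (int i = 0; i < 3; i++) {
            System.out.println("# zk-" + i);
            System.out.print(render(i, 3));
        }
    }
}
```

A replicated ZooKeeper setup normally also needs a separate `dataDir` per instance, each containing a `myid` file that matches its `server.N` index. The article does not show this step, so check it against the ZooKeeper administrator's guide.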

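II. Building the Kafka cluster

The broker configuration file depends on the ZooKeeper settings established above, so we show the broker configuration first. We use 2 Kafka brokers for this cluster: kafka-0 and kafka-1.

1) kafka-0

In the config directory, change the configuration file to: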

- broker.id=0

- port=9092

- num.network.threads=2

- num.io.threads=2

- socket.send.buffer.bytes=1048576

- socket.receive.buffer.bytes=1048576

- socket.request.max.bytes=104857600

- log.dir=./logs

- num.partitions=2

- log.flush.interval.messages=10000

- log.flush.interval.ms=1000

- log.retention.hours=168

- #log.retention.bytes=1073741824

- log.segment.bytes=536870912

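- ##Replication: how many copies of each topic partition are kept in the Kafka cluster,

- ##used to improve the cluster's fault tolerance. The value 1 below means no backup copy; use 2 to keep a copy on both brokers.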

- default.replication.factor=1

- log.cleanup.interval.mins=10

- zookeeper.connect=127.0.0.1:2181,127.0.0.1:2182,127.0.0.1:2183

- zookeeper.connection.timeout.ms=1000000

Kafka is written in Scala, so the Scala environment must be prepared before running it.

- > cd kafka-0

- > ./sbt update

- > ./sbt package

- > ./sbt assembly-package-dependency

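The last command may fail with an error; ignore it for now. Start the Kafka broker:

- > JMX_PORT=9997 bin/kafka-server-start.sh config/server.properties &

Because the ZooKeeper environment is already running, we do not need to start ZooKeeper through Kafka. If you deploy several Kafka brokers on one machine, each one needs its own JMX_PORT.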

2) kafka-1

- broker.id=1

- port=9093

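- ##All other settings are the same as for kafka-0

Then run the same packaging commands as for kafka-0 and start this broker.

- > JMX_PORT=9998 bin/kafka-server-start.sh config/server.properties &

The following command shows how each topic's partitions and replicas are distributed and which of them are alive.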

- > bin/kafka-list-topic.sh --zookeeper localhost:2181

- topic: my-replicated-topic partition: 0 leader: 2 replicas: 1,2,0 isr: 2

- topic: test partition: 0 leader: 0 replicas: 0 isr: 0
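
In the listing above, `replicas` is the set of brokers assigned to a partition and `isr` is the subset currently in sync, so a partition whose `isr` is shorter than `replicas` is under-replicated. A minimal sketch of that check follows. The class name `TopicLineParser` is hypothetical, and the line format is assumed to match the sample output shown here; the real tool's output may differ.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class TopicLineParser {
    // Assumption: each line looks like the sample output in the article.
    private static final Pattern LINE = Pattern.compile(
        "topic:\\s*(\\S+)\\s+partition:\\s*(\\d+)\\s+leader:\\s*(-?\\d+)"
        + "\\s+replicas:\\s*(\\S*)\\s+isr:\\s*(\\S*)");

    /** Returns true when fewer replicas are in sync than are assigned. */
    public static boolean isUnderReplicated(String line) {
        Matcher m = LINE.matcher(line.trim());
        if (!m.find()) {
            throw new IllegalArgumentException("unrecognized line: " + line);
        }
        return count(m.group(5)) < count(m.group(4));
    }

    // Counts the entries in a comma-separated broker id list.
    private static int count(String csv) {
        return csv.length() == 0 ? 0 : csv.split(",").length;
    }

    public static void main(String[] args) {
        String[] lines = {
            "topic: my-replicated-topic partition: 0 leader: 2 replicas: 1,2,0 isr: 2",
            "topic: test partition: 0 leader: 0 replicas: 0 isr: 0"
        };
        for (String line : lines) {
            System.out.println(isUnderReplicated(line) + " <- " + line);
        }
    }
}
```

For the two sample lines, the first is reported as under-replicated (three assigned replicas, one in sync) and the second is not.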

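The environment is now ready, so let's move on to the programming examples.

III. Project setup

The project is built with Maven. The Kafka Java client is, frankly, painful to work with, and setting up the build runs into many problems. The following pom.xml is a suggested reference; the versions of the dependencies must be compatible with one another. If the Kafka client version differs from the Kafka server version, many errors appear, such as "broker id not exists", because the client-server protocol changed when Kafka moved from 0.7 to 0.8. (The 2.8.x in artifact names such as kafka_2.8.2 is the Scala version, not the Kafka version.)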

- <dependencies>

- <dependency>

- <groupId>log4j</groupId>

- <artifactId>log4j</artifactId>

- <version>1.2.14</version>

- </dependency>

- <dependency>

- <groupId>org.apache.kafka</groupId>

- <artifactId>kafka_2.8.2</artifactId>

- <version>0.8.0</version>

- <exclusions>

- <exclusion>

- <groupId>log4j</groupId>

- <artifactId>log4j</artifactId>

- </exclusion>

- </exclusions>

- </dependency>

- <dependency>

- <groupId>org.scala-lang</groupId>

- <artifactId>scala-library</artifactId>

- <version>2.8.2</version>

- </dependency>

- <dependency>

- <groupId>com.yammer.metrics</groupId>

- <artifactId>metrics-core</artifactId>

- <version>2.2.0</version>

- </dependency>

- <dependency>

- <groupId>com.101tec</groupId>

- <artifactId>zkclient</artifactId>

- <version>0.3</version>

- </dependency>

- </dependencies>

IV. Producer code

1) producer.properties: this file goes in the /resources directory

- #partitioner.class=

- ##The broker list may be a subset of the Kafka servers, since the producer only needs it to fetch metadata

- ##Although any broker can serve metadata, listing all brokers here is still recommended

- metadata.broker.list=127.0.0.1:9092,127.0.0.1:9093

- ##sync is used here; async is recommended

- producer.type=sync

- compression.codec=0

- serializer.class=kafka.serializer.StringEncoder

- ##Only takes effect when producer.type=async

- #batch.num.messages=100
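
The same settings can also be assembled in code rather than loaded from a file. The sketch below uses only `java.util.Properties`, so it runs without Kafka on the classpath. The class name `ProducerPropsSketch` and the `host:port` sanity check are additions for illustration, not part of the Kafka API. The resulting object is what would be passed to `new ProducerConfig(...)`.

```java
import java.util.Properties;

public class ProducerPropsSketch {
    /** Builds the properties from producer.properties, switching between sync and async. */
    public static Properties build(String brokerList, boolean async) {
        // Illustrative sanity check: every entry must look like host:port.
        for (String entry : brokerList.split(",")) {
            String[] hostPort = entry.trim().split(":");
            if (hostPort.length != 2) {
                throw new IllegalArgumentException("expected host:port, got: " + entry);
            }
            Integer.parseInt(hostPort[1]); // throws NumberFormatException if the port is not numeric
        }
        Properties props = new Properties();
        props.put("metadata.broker.list", brokerList);
        props.put("producer.type", async ? "async" : "sync");
        props.put("compression.codec", "0");
        props.put("serializer.class", "kafka.serializer.StringEncoder");
        if (async) {
            // Per the comment in the properties file, batching only applies in async mode.
            props.put("batch.num.messages", "100");
        }
        return props;
    }

    public static void main(String[] args) {
        Properties props = build("127.0.0.1:9092,127.0.0.1:9093", true);
        System.out.println("producer.type=" + props.getProperty("producer.type"));
        System.out.println("batch.num.messages=" + props.getProperty("batch.num.messages"));
    }
}
```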

2) LogProducer.java code sample

- package com.test.kafka;

- import java.util.ArrayList;

- import java.util.Collection;

- import java.util.List;

- import java.util.Properties;

- import kafka.javaapi.producer.Producer;

- import kafka.producer.KeyedMessage;

- import kafka.producer.ProducerConfig;

- public class LogProducer {

- private Producer<String,String> inner;

- public LogProducer() throws Exception{

- Properties properties = new Properties();

- properties.load(ClassLoader.getSystemResourceAsStream("producer.properties"));

- ProducerConfig config = new ProducerConfig(properties);

- inner = new Producer<String, String>(config);

- }

- public void send(String topicName,String message) {

- if(topicName == null || message == null){

- return;

- }

- KeyedMessage<String, String> km = new KeyedMessage<String, String>(topicName,message);//with multiple partitions, use new KeyedMessage(String topicName,K key,V value) instead.

- inner.send(km);

- }

- public void send(String topicName,Collection<String> messages) {

- if(topicName == null || messages == null){

- return;

- }

- if(messages.isEmpty()){

- return;

- }

- List<KeyedMessage<String, String>> kms = new ArrayList<KeyedMessage<String, String>>();

- for(String entry : messages){

- KeyedMessage<String, String> km = new KeyedMessage<String, String>(topicName,entry);

- kms.add(km);

- }

- inner.send(kms);

- }

- public void close(){

- inner.close();

- }

- /**

- * @param args

- */

- public static void main(String[] args) {

- LogProducer producer = null;

- try{

- producer = new LogProducer();

- int i=0;

- while(true){

- producer.send("test-topic", "this is a sample" + i);

- i++;

- Thread.sleep(2000);

- }

- }catch(Exception e){

- e.printStackTrace();

- }finally{

- if(producer != null){

- producer.close();

- }

- }

- }

- }
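
The comment in `send()` recommends a keyed message when the topic has several partitions. The idea is that a partitioner maps each key to a partition, so messages with the same key land in the same partition. The sketch below illustrates a generic hash-modulo scheme. The class name `KeyPartitionSketch` is hypothetical, and whether the 0.8 default partitioner behaves exactly this way is an assumption, not something the article states.

```java
public class KeyPartitionSketch {
    /**
     * Maps a key to a partition index in [0, numPartitions).
     * Illustration of hash-modulo partitioning; not Kafka's own code.
     */
    public static int partitionFor(Object key, int numPartitions) {
        if (numPartitions <= 0) {
            throw new IllegalArgumentException("numPartitions must be positive");
        }
        // Mask the sign bit instead of using Math.abs, which stays negative for Integer.MIN_VALUE.
        return (key.hashCode() & 0x7fffffff) % numPartitions;
    }

    public static void main(String[] args) {
        String[] keys = {"a", "b", "a"};
        for (String key : keys) {
            // The same key always maps to the same partition.
            System.out.println(key + " -> partition " + partitionFor(key, 2));
        }
    }
}
```

With `num.partitions=2` as in the broker configuration, this prints partition 1 for "a" (hash 97) and partition 0 for "b" (hash 98).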

V. Consumer

1) consumer.properties: this file is located in the /resources directory

- zookeeper.connect=127.0.0.1:2181,127.0.0.1:2182,127.0.0.1:2183

- # timeout in ms for connecting to zookeeper

- zookeeper.connection.timeout.ms=1000000

- #consumer group id

- group.id=test-group

- #consumer timeout

- #consumer.timeout.ms=5000

- auto.commit.enable=true

- auto.commit.interval.ms=60000

2) LogConsumer.java code sample

- package com.test.kafka;

- import java.util.HashMap;

- import java.util.List;

- import java.util.Map;

- import java.util.Properties;

- import java.util.concurrent.ExecutorService;

- import java.util.concurrent.Executors;

- import kafka.consumer.Consumer;

- import kafka.consumer.ConsumerConfig;

- import kafka.consumer.ConsumerIterator;

- import kafka.consumer.KafkaStream;

- import kafka.javaapi.consumer.ConsumerConnector;

- import kafka.message.MessageAndMetadata;

- public class LogConsumer {

- private ConsumerConfig config;

- private String topic;

- private int partitionsNum;

- private MessageExecutor executor;

- private ConsumerConnector connector;

- private ExecutorService threadPool;

- public LogConsumer(String topic,int partitionsNum,MessageExecutor executor) throws Exception{

- Properties properties = new Properties();

- properties.load(ClassLoader.getSystemResourceAsStream("consumer.properties"));

- config = new ConsumerConfig(properties);

- this.topic = topic;

- this.partitionsNum = partitionsNum;

- this.executor = executor;

- }

- public void start() throws Exception{

- connector = Consumer.createJavaConsumerConnector(config);

- Map<String,Integer> topics = new HashMap<String,Integer>();

- topics.put(topic, partitionsNum);

- Map<String, List<KafkaStream<byte[], byte[]>>> streams = connector.createMessageStreams(topics);

- List<KafkaStream<byte[], byte[]>> partitions = streams.get(topic);

- threadPool = Executors.newFixedThreadPool(partitionsNum);

- for(KafkaStream<byte[], byte[]> partition : partitions){

- threadPool.execute(new MessageRunner(partition));

- }

- }

- public void close(){

- try{

- threadPool.shutdownNow();

- }catch(Exception e){

- //

- }finally{

- connector.shutdown();

- }

- }

- class MessageRunner implements Runnable{

- private KafkaStream<byte[], byte[]> partition;

- MessageRunner(KafkaStream<byte[], byte[]> partition) {

- this.partition = partition;

- }

- public void run(){

- ConsumerIterator<byte[], byte[]> it = partition.iterator();

- while(it.hasNext()){

- //connector.commitOffsets(); commits the offset manually; use when auto.commit.enable=false

- MessageAndMetadata<byte[],byte[]> item = it.next();

- System.out.println("partition:" + item.partition());

- System.out.println("offset:" + item.offset());

- executor.execute(new String(item.message()));//decoded with the platform default charset; specify UTF-8 explicitly if needed, and watch for exceptions

- }

- }

- }

- interface MessageExecutor {

- public void execute(String message);

- }

- /**

- * @param args

- */

- public static void main(String[] args) {

- LogConsumer consumer = null;

- try{

- MessageExecutor executor = new MessageExecutor() {

- public void execute(String message) {

- System.out.println(message);

- }

- };

- consumer = new LogConsumer("test-topic", 2, executor);

- consumer.start();

- }catch(Exception e){

- e.printStackTrace();

- }finally{

- // if(consumer != null){

- // consumer.close();

- // }

- }

- }

- }

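Note that the LogConsumer class above pays little attention to error handling. You must handle the case where MessageExecutor.execute() throws an exception; otherwise the exception ends the consumer thread's loop.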
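
One way to handle this is to wrap the executor so that a failure on one message is recorded without propagating into the consumer loop. This is a minimal sketch, not the author's implementation. `MessageExecutor` is redeclared with the same shape as in LogConsumer so the example compiles on its own, and `SafeExecutor` is a hypothetical name.

```java
import java.util.concurrent.atomic.AtomicInteger;

public class SafeExecutorSketch {
    /** Same shape as the MessageExecutor interface in LogConsumer, redeclared for a standalone example. */
    public interface MessageExecutor {
        void execute(String message);
    }

    /** Hypothetical wrapper: catches runtime exceptions from the delegate and counts them. */
    public static class SafeExecutor implements MessageExecutor {
        private final MessageExecutor delegate;
        // AtomicInteger because several consumer threads may share one executor.
        private final AtomicInteger failures = new AtomicInteger();

        public SafeExecutor(MessageExecutor delegate) {
            this.delegate = delegate;
        }

        public void execute(String message) {
            try {
                delegate.execute(message);
            } catch (RuntimeException e) {
                failures.incrementAndGet();
                System.err.println("failed to process message: " + e);
            }
        }

        public int failureCount() {
            return failures.get();
        }
    }

    public static void main(String[] args) {
        SafeExecutor safe = new SafeExecutor(new MessageExecutor() {
            public void execute(String message) {
                if (message.length() == 0) {
                    throw new IllegalArgumentException("empty message");
                }
                System.out.println(message);
            }
        });
        safe.execute("this is a sample0");
        safe.execute(""); // logged and counted, does not propagate
        System.out.println("failures: " + safe.failureCount());
    }
}
```

Passing `new SafeExecutor(executor)` to the LogConsumer constructor would keep the consuming thread alive after a bad message. Whether to skip, retry, or stop on failure depends on the application.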

When testing, start the consumer first and then the producer, so that the newest messages can be observed as they arrive.
