Converting between pyspark.sql.DataFrame and pandas.DataFrame

The code is shown below; each step is explained in the code comments:

# -*- coding: utf-8 -*-

import pandas as pd

from pyspark.sql import SparkSession

from pyspark.sql import SQLContext

from pyspark import SparkContext

#初始化資料

#初始化pandas DataFrame

df = pd.DataFrame([[1, 2, 3], [4, 5, 6]], index=['row1', 'row2'], columns=['c1', 'c2', 'c3'])

#列印資料

print df

#初始化spark DataFrame

sc = SparkContext()

if __name__ == "__main__":

spark = SparkSession\

.builder\

.appName("testDataFrame")\

.getOrCreate()

sentenceData = spark.createDataFrame([

(0.0, "I like Spark"),

(1.0, "Pandas is useful"),

(2.0, "They are coded by Python ")

], ["label", "sentence"])

#顯示資料

sentenceData.select("label").show()

#spark.DataFrame 轉換成 pandas.DataFrame

sqlContest = SQLContext(sc)

spark_df = sqlContest.createDataFrame(df)

#顯示資料

spark_df.select("c1").show()

# pandas.DataFrame 轉換成 spark.DataFrame

pandas_df = sentenceData.toPandas()

#列印資料

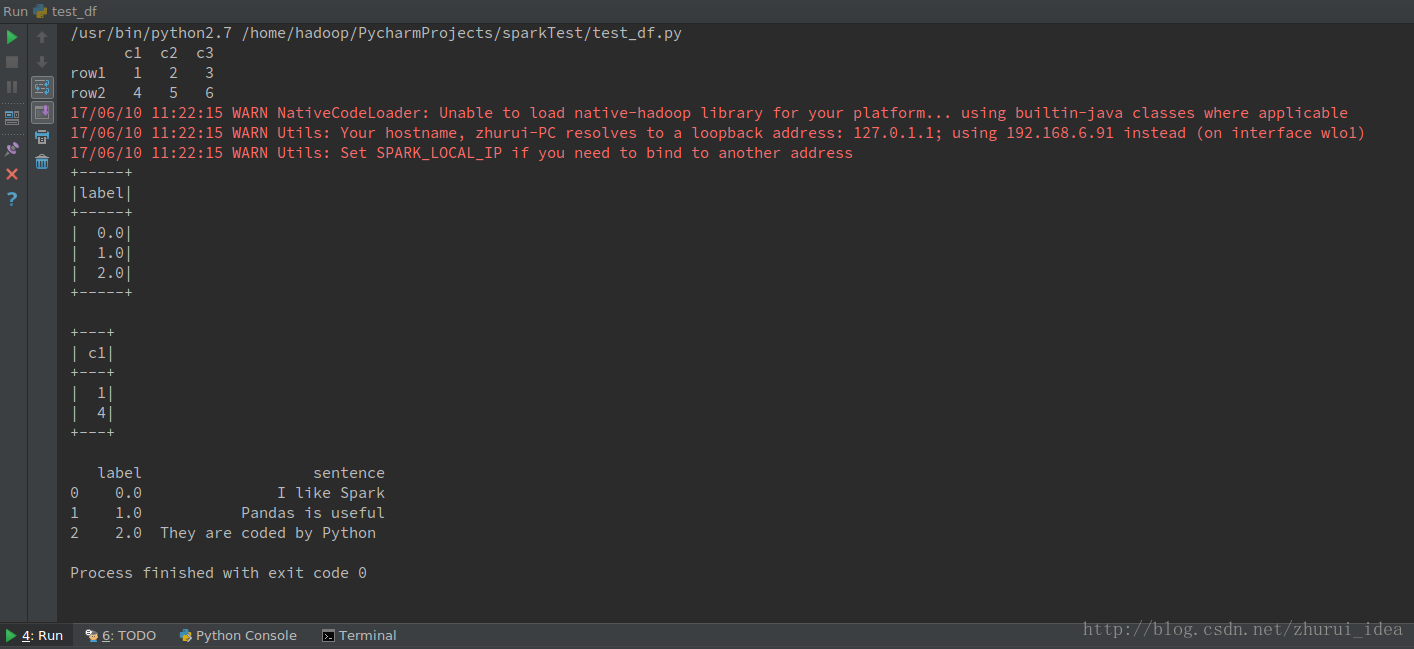

print pandas_df程式結果
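
One detail to watch in the pandas-to-Spark direction: Spark DataFrames have no row index, so the pandas index (`row1`, `row2` in the example) is not carried over by `createDataFrame`. The following is a minimal sketch of one way to keep those labels, by moving the index into an ordinary column before converting. The helper names `index_to_column` and `pandas_to_spark_keeping_index` and the column name `row_id` are hypothetical, chosen only for illustration.

```python
import pandas as pd


def index_to_column(pdf, name="row_id"):
    """Return a copy of pdf whose index is stored as an ordinary column."""
    # rename_axis names the index; reset_index then moves it into a column.
    return pdf.rename_axis(name).reset_index()


def pandas_to_spark_keeping_index(spark, pdf, name="row_id"):
    """Convert to a Spark DataFrame; `spark` is assumed to be an existing SparkSession."""
    return spark.createDataFrame(index_to_column(pdf, name))


df = pd.DataFrame([[1, 2, 3], [4, 5, 6]],
                  index=['row1', 'row2'], columns=['c1', 'c2', 'c3'])
prepared = index_to_column(df)
print(prepared.columns.tolist())  # ['row_id', 'c1', 'c2', 'c3']
```

With a running session, `pandas_to_spark_keeping_index(spark, df)` would then yield a Spark DataFrame that has `row_id` alongside `c1`, `c2`, and `c3`.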
